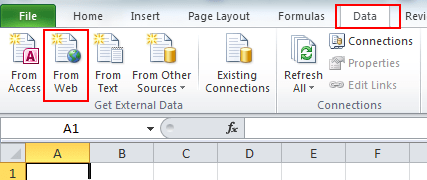

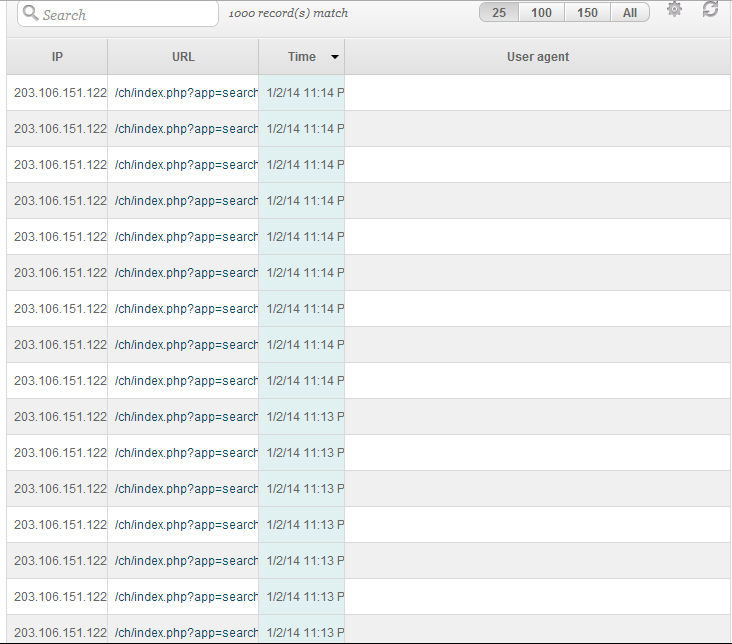

I have here a modified version of a web scraping script I wrote some weeks back. With some help from this forum, the modified version is faster than the earlier one, at about 4 seconds per iteration. However, I need to run many iterations (over 1 million), which still takes far too long. Is there any way to further improve its performance? Thank you.

The script, in the order its pieces run:

- Imports: `from bs4 import BeautifulSoup, SoupStrainer`
- A function to convert a time string to minutes.
- A delay function in case of network disconnection: `def retry(ExceptionToCheck, tries=1000, delay=3, backoff=2, logger=None):`. Before each retry it builds the message `msg = "%s, Retrying in %d seconds." % (str(e), mdelay)`.
- A function with the docstring: "This function reads the cities data from csv file and processes them into an O-D for input into the web scrapper".
- A function with the docstring: "This function scraped the required website and extracts the quickest connection time within given time durations". The retry settings used are `tries=1000, delay=3, backoff=2`. Its steps:
  - Google demands a user-agent that isn't a robot: `br.addheaders = [('User-agent', …`
  - Assign origin and destination to the `o` and `d` variables.
  - Enter the text input (this section should be automated to read multiple text inputs, as shown in the question): `br.form = o` (origin train station, From), `br.form = d` (destination train station, To), `br.form = x` (search time), `br.form = '10.05.17'` (search date).
  - Click the LATER link a given number of times to get more trip times.
  - Read the result of each click and convert it to a response for Beautiful Soup: get the response from the mechanize Browser, then `parse_only = SoupStrainer("table", class_="hfs_overview")` and `soup = BeautifulSoup(br.response(), 'lxml', from_encoding="utf-8", parse_only=parse_only)`.
  - Scrape the search results from the resulting table: `time = tr.select('td.time').contents.strip()`, then `ListOfSearchTimes.append(time.encode('latin-1'))`.
  - Check that the duration cell is not empty: `duration = ()`
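The `retry` signature and the "Retrying in %d seconds" message quoted in the question match a common retry-with-exponential-backoff decorator. The following is a minimal Python 3 sketch of that pattern, not the poster's actual code: the inner names `deco_retry`, `f_retry`, and `mtries` are assumptions, as is the `to_minutes` helper, which assumes durations arrive as `H:MM` strings (the question does not show the format).

```python
import time
from functools import wraps


def retry(ExceptionToCheck, tries=1000, delay=3, backoff=2, logger=None):
    """Retry the decorated function, multiplying the wait by `backoff` each time."""
    def deco_retry(f):
        @wraps(f)
        def f_retry(*args, **kwargs):
            mtries, mdelay = tries, delay
            while mtries > 1:
                try:
                    return f(*args, **kwargs)
                except ExceptionToCheck as e:
                    msg = "%s, Retrying in %d seconds." % (str(e), mdelay)
                    if logger:
                        logger.warning(msg)
                    else:
                        print(msg)
                    time.sleep(mdelay)
                    mtries -= 1
                    mdelay *= backoff
            # Last attempt: any exception now propagates to the caller.
            return f(*args, **kwargs)
        return f_retry
    return deco_retry


def to_minutes(duration):
    """Convert an 'H:MM' string to minutes (assumed format, hypothetical helper)."""
    hours, minutes = duration.strip().split(":")
    return int(hours) * 60 + int(minutes)
```

With `delay=3` and `backoff=2` the wait doubles after every failure (3 s, 6 s, 12 s, ...), so in a run of over a million iterations a much smaller `tries` value, or a cap on the delay, may be worth considering; otherwise a single unreachable page could stall the whole job.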
